The 1% Advantage: How Marginal Gains Win in Ad Tech

Pathlabs Marketing

March 25, 2026

A performance dashboard lights up green. Spend is tracking. Conversions are coming in. But inside the team, no one can say which optimization actually drove the result.

That is the real challenge of scaled marketing: activity grows faster than understanding. When teams cannot explain what caused lift, they cannot improve performance consistently over time.

At scale, growth does not come from one breakthrough idea. It comes from small, verified gains in measurement, experimentation, and decision-making that compound.

Why Does Scaling Ad Spend Weaken Performance Signals?

Scaled growth breaks when spend increases faster than measurement quality. Teams launch more campaigns, touch more audiences, and make more changes, but they become less certain about what actually caused results to move.

Nearly three-quarters of performance marketers are already experiencing diminishing returns on social ad spend, with more than 30% of total budget producing declining incremental impact rather than new growth.

Diminishing returns are not just a media problem. They are a measurement problem. Audience saturation, bid pressure, and channel overlap make it harder to separate real lift from noise. That is where marginal gains either start compounding or disappear.

What Do Marginal Gains Actually Look Like In High-Performing Marketing Teams?

Marginal gains show up as repeatable improvements in how teams define success, interpret results, and act on evidence.

High-performing teams make marginal gains by getting a few basics exactly right:

They align on one primary business metric.

They design tests to answer causal questions, not just report movement.

They use shared definitions, so teams spend less time debating numbers and more time making decisions.

For example, an agency scaling paid media for a regional home services client may see Meta driving a high volume of leads at an efficient cost per lead. But when the team looks at qualified appointments and lift-focused measurement, it finds that one audience segment is capturing people already likely to convert, while another produces fewer leads but more booked jobs.

That insight may only shift 5% to 10% of budget and change which creative gets prioritized, but over time, those small corrections improve lead quality, reduce wasted spend, and make performance gains easier to repeat.
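The home services example comes down to simple arithmetic: the same spend can look efficient on cost per lead and expensive on cost per booked job. The minimal Python sketch below compares two hypothetical audience segments on both metrics. The segment names, spend, lead counts, and booking counts are illustrative assumptions, not client data. The sketch also does not show on its own which segment is incremental; that still requires a lift test.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    spend: float      # media spend in dollars (hypothetical)
    leads: int        # leads reported by the ad platform
    booked_jobs: int  # leads that became booked jobs (e.g. matched from CRM data)

    @property
    def cost_per_lead(self) -> float:
        return self.spend / self.leads

    @property
    def cost_per_booked_job(self) -> float:
        return self.spend / self.booked_jobs


# Hypothetical figures, chosen only to illustrate the pattern in the example.
segments = [
    Segment("segment_a", spend=10_000, leads=500, booked_jobs=25),
    Segment("segment_b", spend=10_000, leads=300, booked_jobs=45),
]

for seg in segments:
    print(f"{seg.name}: cost per lead ${seg.cost_per_lead:,.2f}, "
          f"cost per booked job ${seg.cost_per_booked_job:,.2f}")

# The "winner" depends entirely on which metric the team optimizes for.
best_cpl = min(segments, key=lambda s: s.cost_per_lead)
best_cpbj = min(segments, key=lambda s: s.cost_per_booked_job)
print(f"lowest cost per lead: {best_cpl.name}")
print(f"lowest cost per booked job: {best_cpbj.name}")
```

With these made-up numbers, segment A wins on cost per lead ($20.00 vs. $33.33) while segment B wins on cost per booked job ($222.22 vs. $400.00), which is the kind of reversal the example describes.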

Why Does Optimization Break Down Without Better Measurement at Scale?

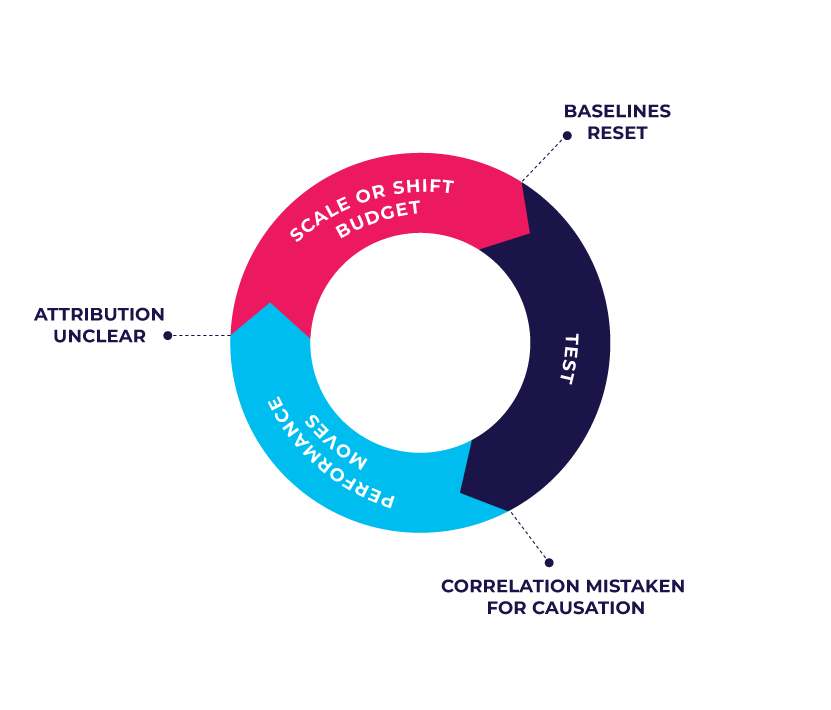

Optimization activity increases at scale, but learning often does not.

Too many variables move at once, platform reporting gets treated as proof, and budgets are reallocated before results settle. Teams stay busy, but the learning does not stick.

That is why measurement becomes the bottleneck. As spend grows, so does complexity. More channels overlap, more campaigns influence the same customer journey, and more outside factors shape results. The measurement approach that worked at lower spend levels no longer yields clear answers.

At that point, teams may keep making optimizations, but they cannot tell which changes improved performance and which only added noise.

At scale, growth depends on more than constant optimization. It depends on whether teams can identify what created lift, retain that learning, and apply it again.

What Changes When Marketing Teams Measure For Causality?

When teams measure for causality, marginal gains become reliable and reusable.

Teams design experiments to isolate incremental impact, not just dashboard movement. They account for overlap, seasonality, and outside variables before making budget decisions. That makes results easier to trust and useful beyond a single campaign.
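One common way to isolate incremental impact is a holdout test: a randomly selected control group is withheld from the ads, and lift is the difference in conversion rate between exposed and held-out users. The sketch below is a minimal illustration, assuming a simple user-level randomized holdout and a standard two-proportion z-test; the function names and all counts are hypothetical, and it does not model the overlap or seasonality adjustments a full measurement program would add.

```python
import math
from dataclasses import dataclass


@dataclass
class HoldoutResult:
    test_rate: float                # conversion rate in the exposed (test) group
    control_rate: float             # conversion rate in the held-out (control) group
    absolute_lift: float            # difference in conversion rate, test minus control
    relative_lift: float            # absolute lift as a share of the control rate
    incremental_conversions: float  # test-group conversions attributable to the ads
    z_score: float
    p_value: float                  # two-sided, normal approximation


def normal_cdf(x: float) -> float:
    """Standard normal CDF expressed with math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def holdout_lift(test_conv: int, test_n: int,
                 control_conv: int, control_n: int) -> HoldoutResult:
    """Estimate incremental lift from a randomized holdout (two-proportion z-test).

    Assumes users were randomly split into exposed and held-out groups before
    the campaign ran, so the control rate estimates what the test group would
    have done without ads.
    """
    p_t = test_conv / test_n
    p_c = control_conv / control_n
    # Pooled conversion rate under the null hypothesis of zero lift.
    p_pool = (test_conv + control_conv) / (test_n + control_n)
    se = math.sqrt(p_pool * (1.0 - p_pool) * (1.0 / test_n + 1.0 / control_n))
    z = (p_t - p_c) / se
    p_value = 2.0 * (1.0 - normal_cdf(abs(z)))
    return HoldoutResult(
        test_rate=p_t,
        control_rate=p_c,
        absolute_lift=p_t - p_c,
        relative_lift=(p_t - p_c) / p_c,
        incremental_conversions=(p_t - p_c) * test_n,
        z_score=z,
        p_value=p_value,
    )


# Hypothetical test: 90,000 exposed users and a 10,000-user holdout.
result = holdout_lift(test_conv=1_260, test_n=90_000,
                      control_conv=120, control_n=10_000)
print(f"test rate:     {result.test_rate:.2%}")
print(f"control rate:  {result.control_rate:.2%}")
print(f"relative lift: {result.relative_lift:.1%}")
print(f"incremental conversions: {result.incremental_conversions:.0f}")
print(f"p-value: {result.p_value:.3f}")
```

With these hypothetical counts, the exposed group converts at 1.4% versus 1.2% for the holdout, a relative lift of about 17% and roughly 180 incremental conversions, yet the two-sided p-value is around 0.1. The dashboard shows movement, but the test has not yet separated lift from noise, so a larger holdout or a longer test window would be needed before moving budget. The normal approximation used here also assumes reasonably large groups.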

With causality in place, experimentation stops being reactive and becomes cumulative.

This takes more than better analytics. It takes clear ownership of measurement, testing, and follow-through.

Pathlabs' Media Execution Partnership supports that model by embedding measurement expertise directly into a client’s marketing operation. Instead of handing over reports after the fact, the team helps run experiments, validate lift, and turn results into day-to-day budget and execution decisions.

The Next Step For Teams Trying To Compound Marginal Gains At Scale

At scale, the advantage does not come from doing more. It comes from learning faster and keeping what you learn.

That only happens when measurement, experimentation, and prioritization work as one system. Independent measurement reduces platform bias. Better experiments reveal real lift. Clear priorities keep teams focused on what actually improves performance.

Over time, those small verified gains stack up. And the teams that can compound them build an advantage that is difficult for slower, noisier organizations to match.

The real advantage is not one big optimization. It is a system that keeps producing small, verified improvements over time. The teams that build that system will outperform teams that rely on motion, instinct, or platform reporting alone.